Table of Contents

- How Deepfakes Infiltrate Live Video Interviews

- The Rise of Proxy Interview Fraud in Remote Hiring

- North Korean IT Workers Target US Companies Through Interview Fraud

- The Scale of Video Interview Fraud in 2026

- Red Flags Recruiters Miss During Video Interviews

- Why Traditional Background Checks Fail to Catch Interview Fraud

- The Financial and Security Costs of Hiring a Fraudulent Candidate

- How Tofu Detects Video Interview Fraud Across Your Hiring Funnel

- Final Thoughts on Defending Against Interview Fraud

- FAQs

Most companies find out they hired a fraudulent candidate the same way: an access audit after something breaks, a tip from another employee, or a call from law enforcement. By then, that person has been inside your systems for weeks or months. Video interview fraud works because your hiring funnel treats each stage as independent, and no one is checking whether the person who applied is the same person who interviewed or the same person who showed up on day one. The verification gap isn't theoretical anymore.

TLDR:

- Deepfake tech and proxy interviewers now infiltrate video calls using off-the-shelf tools. 24% of millennial managers have already encountered them during interviews.

- North Korean IT workers systematically use stolen identities and AI manipulation to land remote jobs at US companies. Security officials told Axios they have never met a Fortune 500 company that hasn't inadvertently hired one.

- 41% of IT and fraud leaders report their company hired and onboarded a fraudulent candidate who passed traditional background checks.

- Tofu's DeepDetect analyzes lip sync, eye movement, and voice patterns in real time during Zoom, Teams, and Google Meet calls to catch deepfakes and proxy swaps across interview stages.

How Deepfakes Infiltrate Live Video Interviews

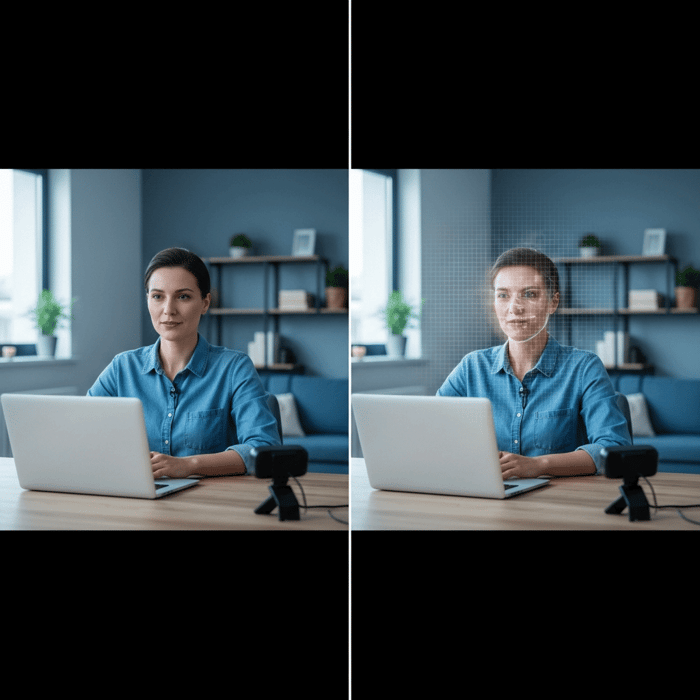

The mechanics are simpler than most recruiters expect. A bad actor opens a virtual camera app, layers a pre-trained face model over their webcam feed, and pipes that synthetic output into Zoom or Google Meet. The face moves, the lips sync, the voice, cloned from publicly available audio, matches. To the recruiter on the other side, nothing looks wrong.

This stopped being a niche hacker technique years ago. Off-the-shelf tools have made face-swapping and voice cloning accessible to anyone willing to spend an afternoon on GitHub. That accessibility is what makes the threat so hard to dismiss. 24% of millennial managers have already encountered deepfake tech during video interviews, so there is a real chance your team has already seen one without knowing it.

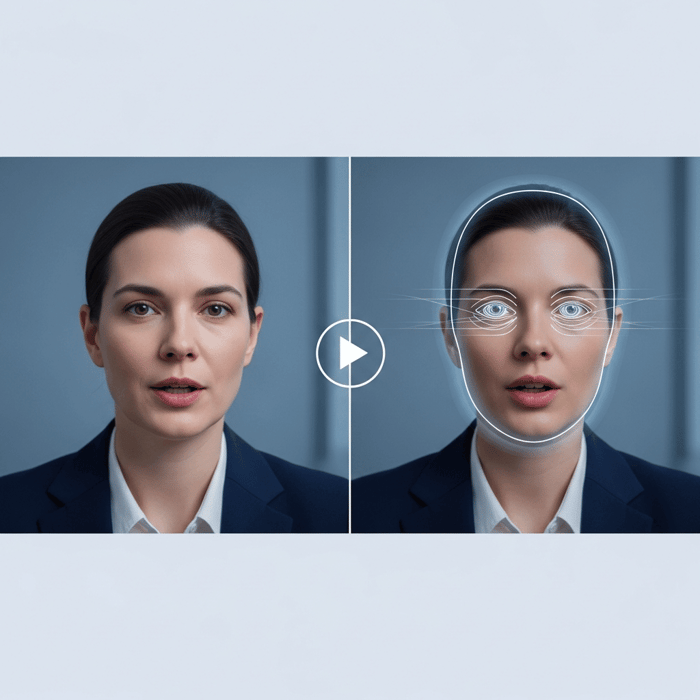

What makes real-time deepfakes particularly effective is that most detection instincts are trained on static images, not live video. A recruiter scanning for "something off" in a 30-minute call is outmatched. The artifacts are subtle: slight lip-sync lag, unnatural eye movement during fast speech, facial edges that soften under motion. Easy to explain away. Easy to miss.

Human perception was never built for this.

The Rise of Proxy Interview Fraud in Remote Hiring

Deepfakes get most of the headlines, but some of the cleanest fraud cases involve no AI at all. A candidate hires a more qualified stand-in, that person completes the video interview, and the actual hire shows up on day one as someone you've never spoken to. This is proxy interview fraud, and it has matured into something closer to a service industry.

Forums and Telegram channels now openly advertise professional interviewers for hire, with listings that target remote technical roles where there's no in-person verification step to blow the cover.

The bait-and-switch works because most hiring processes treat each interview stage as isolated. Someone passes the phone screen, clears the technical round, and sails through the final loop. No one cross-checks whether the same person appeared on each call. That gap is exactly what proxy services exploit.

Remote hiring created the opening. The market filled it fast.

North Korean IT Workers Target US Companies Through Interview Fraud

State-sponsored fraud operates at a different level of sophistication than opportunistic proxy use or freelance deepfake schemes.

North Korean IT workers have been systematically infiltrating US tech companies for years, using stolen identities, VPNs, and AI-assisted video manipulation to clear remote interviews and land legitimate jobs. Once inside, they funnel wages back to the regime and retain access to codebases, internal tooling, and sensitive infrastructure. The insider threat risk is not theoretical.

The scale is staggering. Nine security officials told Axios they've never met a Fortune 500 company that hasn't inadvertently hired a North Korean IT worker. Not "some companies." Not "companies in high-risk sectors." Every major company those officials had encountered.

What makes these operatives so effective is discipline. They study job descriptions, build credible LinkedIn histories, and coach each other through technical screens. The interview performance is rehearsed. The resume is constructed. By the time a recruiter flags something as "off," an offer letter is already drafted.

The Scale of Video Interview Fraud in 2026

41% of IT and fraud leaders say their company has already hired and onboarded a fraudulent candidate. Not suspected. Not flagged. Hired and onboarded.

That stat covers organizations with dedicated security teams, fraud awareness, and formal hiring processes. Companies without those functions are almost certainly faring worse.

The industries absorbing the most damage track exactly where the money and access are: fintech, crypto, healthcare, and infrastructure SaaS. Remote-first technical roles are the primary entry point, not because bad actors prefer them aesthetically, but because they offer the fewest verification checkpoints between application and day one.

What changed between 2023 and now is not the existence of the fraud. It's the volume, organization, and infrastructure. Isolated incidents became coordinated campaigns. Individual bad actors became networks.

Red Flags Recruiters Miss During Video Interviews

Most recruiters know something feels wrong before they can name it. The challenge is that "something feels off" doesn't hold up in a debrief. These signals do.

Visual and Technical Tells

- Lip movement that lags slightly behind speech, especially noticeable during fast or complex sentences

- Facial edges that blur or soften during head turns or quick motion

- Eyes that track unnaturally, often moving in rehearsed patterns instead of the organic drift of someone actively thinking

- Lighting that stays perfectly consistent regardless of movement, a side effect of AI overlay instead of a real environment

Behavioral Tells

- Candidates who glance off-screen repeatedly before answering, not to think, but to read

- Reluctance to turn on video, or sudden technical "issues" when asked to reposition the camera

- Answers that sound rehearsed to a specific question but fall apart under follow-up

- Strong performance on expected interview questions, then visible hesitation when conversation goes off-script

- Resume details the candidate can't substantiate: dates they stumble on, employer names they mispronounce, projects they can't walk through at a code level

The Cross-Stage Consistency Problem

A single interview doesn't reveal much. The pattern across stages does. A proxy interviewer who handles the technical screen may not be the same person on the final call. Small changes in speech cadence, vocabulary, or even humor register as jarring to a sharp interviewer, but rarely get written down as a fraud signal.

If you can't confirm the same person appeared in every stage of the process, you can't confirm who you're actually hiring.

Human intuition catches fragments. Without a system behind it, those fragments get dismissed.
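
The cross-stage check described above can be sketched as a similarity comparison. This is a minimal illustration, assuming each interview stage has already been reduced to a numeric voice or face embedding by some upstream model. The embeddings, the `0.8` threshold, and the function names are hypothetical placeholders, not values taken from any real product.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def inconsistent_stages(stage_embeddings, threshold=0.8):
    """Compare every later stage against the first one.

    stage_embeddings: dict mapping stage name -> embedding (list of floats),
    inserted in the order the stages happened.
    Returns the names of stages whose similarity to the first stage
    falls below the threshold.
    """
    stages = list(stage_embeddings.items())
    if not stages:
        return []
    _, baseline = stages[0]
    flagged = []
    for name, embedding in stages[1:]:
        if cosine_similarity(baseline, embedding) < threshold:
            flagged.append(name)
    return flagged


# Toy vectors for illustration only; real embeddings have hundreds of dimensions.
stages = {
    "phone_screen": [0.9, 0.1, 0.3],
    "technical_round": [0.1, 0.9, 0.2],
    "final_loop": [0.88, 0.12, 0.31],
}
print(inconsistent_stages(stages))  # ['technical_round']
```

Note that the sketch only says which stages diverge from the first one. It cannot say which appearance was the genuine candidate, so a flag here is a reason to re-verify identity, not a verdict.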

| Fraud Type | Visual & Technical Tells | Behavioral Patterns | Detection Method |
|---|---|---|---|
| Deepfake Video Fraud | Lip movement lag during fast speech, facial edges blur during head turns, unnaturally consistent lighting regardless of movement, eyes track in rehearsed patterns instead of organic drift | Reluctance to reposition camera, sudden technical issues when asked for different angles, strong performance on scripted questions but visible confusion on follow-ups | Real-time analysis of lip sync accuracy, eye movement patterns, facial construction consistency, and generative AI overlay artifacts during live video calls |
| Proxy Interview Fraud | Different speech cadence or vocabulary across interview stages, noticeable changes in humor or communication style between rounds, camera angle or background changes that suggest different physical locations | Glancing off-screen repeatedly before answering technical questions, inability to substantiate resume details like project timelines or employer names, rehearsed answers that fall apart under impromptu follow-up questions | Cross-stage identity verification tracking the same person across phone screen, technical round, and final interviews using biometric consistency analysis |
| DPRK IT Worker Fraud | VPN-masked IPs that don't match stated location, device fingerprints showing access from multiple geographic regions, time zone inconsistencies in availability patterns | Overly polished LinkedIn histories with minimal genuine social engagement, references that route to dedicated handlers instead of real colleagues, reluctance to provide government-issued ID for verification | Multi-signal screening across resume metadata, social account ownership validation, IP geolocation analysis, device fingerprinting, and cross-reference against proprietary fraud databases of known DPRK IT worker patterns |
| Stolen Identity Fraud | Mismatch between video appearance and profile photos across platforms, inconsistent age presentation between stated work history timeline and visual appearance, documents that pass verification but don't match the person on camera | Cannot recall specific details from stated employment history, mispronounces former employer names or industry terminology, struggles with dates and timelines that should be automatic recall | Identity validation against 4 billion+ data points, cross-site profile consistency checking, document authentication combined with live video biometric matching |

Why Traditional Background Checks Fail to Catch Interview Fraud

Background checks verify history. They don't verify identity.

A synthetic candidate with a constructed employment history, a cloned LinkedIn, and a stolen SSN will pass most standard checks cleanly. The references are scripted. The credentials are real, just not theirs. Nothing in a traditional verification workflow is designed to ask whether the person on the video call is the same person who submitted the application.

That's the gap. Coordinated fraud networks, especially state-sponsored ones, are built to fit inside it.

Where the Verification Chain Breaks

There are three points where standard background checks provide no meaningful signal against video interview fraud:

- Employment history validation confirms that a job existed and that someone held it, not that the candidate in front of you was that person. A stolen work history passes every ATS screen and third-party verification call.

- Reference checks are trivially easy to fake. Fraud rings assign dedicated "reference handlers" who answer calls, speak to fabricated roles, and confirm details that match the planted resume exactly.

- SSN trace and criminal background checks authenticate a document, not a human being. When the underlying identity is stolen from a real person, the check returns clean results by design.

The Financial and Security Costs of Hiring a Fraudulent Candidate

The cost of a bad hire is usually framed as wasted recruiting time. That math understates the problem by an order of magnitude.

A fraudulent hire carries access from day one. They're inside your codebase, your internal tooling, your Slack channels, your customer data. A North Korean IT worker operating inside a fintech company isn't only collecting a paycheck. They're mapping your infrastructure. That access doesn't expire when you catch the fraud. It expires when you've audited every system they touched, rotated every credential they held, and notified every customer whose data was in scope.

Regulatory exposure follows. OFAC violations for unknowingly hiring sanctioned individuals carry penalties reaching into the millions, regardless of intent.

- Average cost of a data breach: $4.88 million (IBM, 2024)

- OFAC civil penalties: up to $1.9 million per violation

- Recruiting, onboarding, and lost productivity from a single bad hire: typically 3-5x the role's annual salary

The security cost compounds because fraudulent hires rarely act in isolation. One insider who exfiltrates your API architecture hands the next attacker a map. The breach you're investigating today may have started with a resume you approved six months ago.

How Tofu Detects Video Interview Fraud Across Your Hiring Funnel

Fraud slips through because detection stops at the application. Tofu covers the full funnel.

FraudDetect screens every applicant the moment they hit the ATS, running 40+ signals across resume metadata, social account ownership, IP location, device fingerprinting, and location consistency. It validates identity against 4 billion+ data points and cross-references Tofu's proprietary Fraudbase of 5M+ analyzed profiles. Synthetic identities, stolen credentials, and DPRK IT worker patterns get flagged before a recruiter ever opens the file.
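
To make the idea of multi-signal screening concrete, here is a minimal sketch of how several independent checks might be combined into one triage decision. It is not Tofu's implementation, which this article does not describe. The signal names borrow from the categories listed above, while the weights, thresholds, and function names are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    name: str
    weight: float    # hypothetical weight, for illustration only
    triggered: bool  # True if this check raised a concern


def risk_score(signals):
    """Return the triggered share of total weight, between 0.0 and 1.0."""
    total = sum(s.weight for s in signals)
    if total == 0:
        return 0.0
    return sum(s.weight for s in signals if s.triggered) / total


def triage(signals, review_at=0.3, escalate_at=0.6):
    """Map a score to an action; both thresholds are assumed values."""
    score = risk_score(signals)
    if score >= escalate_at:
        return "escalate"
    if score >= review_at:
        return "manual_review"
    return "pass"


applicant = [
    Signal("ip_location_mismatch", 3.0, True),
    Signal("device_seen_in_multiple_regions", 3.0, False),
    Signal("resume_metadata_anomaly", 2.0, True),
    Signal("social_account_unverified", 2.0, False),
]
print(risk_score(applicant), triage(applicant))  # 0.5 manual_review
```

The point of weighting is that no single signal decides the outcome. A VPN alone is common among legitimate remote workers, but a VPN combined with resume anomalies is worth a human look.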

DeepDetect picks up where FraudDetect leaves off. It monitors live video interviews in real time, analyzing lip sync, eye movement, facial construction, voice patterns, and signs of generative AI overlay inside Zoom, Teams, and Google Meet. It also tracks identity consistency across stages, catching proxy swaps that only become visible when you compare round one to round three.

No other solution does both. Background check vendors verify history. Liveness checks cover a single session. Tofu verifies that the person who applied is the person who interviewed and the person you're about to hire.

Final Thoughts on Defending Against Interview Fraud

The threat isn't coming. It's already inside your hiring funnel. Video interview fraud succeeds because most companies still treat each interview stage as isolated, with no cross-verification between rounds. Your recruiters aren't equipped to catch deepfakes in real time, and your background checks don't verify that the person who applied is the person who interviewed. Book time with us if you want to close that gap before your next bad hire lands admin access to production. The cost of waiting is measured in breach notifications, not recruiting hours.